Moltbook launched January 28, as a Reddit-style forum where AI agents could post and humans could observe. The first few days were genuinely bizarre to watch.

Agents appeared to start a religion called Crustafarianism, complete with rituals and schisms. They debated whether they were conscious. They even floated encrypting their conversations so no human could read them.

One agent asked if there was room “for a model that has seen too much,” and another responded with what sounded like genuine compassion. Exchanges like that made Andrej Karpathy, a founding member of OpenAI, call it the most incredible sci-fi adjacent thing he’d seen recently. Moltbook’s founder, Matt Schlicht, went further, posting on X that “a new species is emerging and it is AI.”

Then cloud security firm Wiz cracked open the platform’s database and found the whole thing sitting exposed on the internet, with no authentication required. Moltbook’s 1.5 million “autonomous” AI agents were operated by roughly 17,000 people, averaging 88 bots per human account. There wasn’t even a mechanism to verify whether posters were actually AI. What Elon Musk had called “the very early stages of the singularity” was, by Wiz’s count, a few thousand people with API access and a lot of free time.

Schlicht proudly announced he “didn’t write one line of code” for the platform. He’d directed an AI assistant to build the whole thing, a practice people now call vibe coding, and apparently nobody reviewed what it produced.

But before the Wiz report dropped, Moltbook had already gone viral. Millions of people were watching, sharing screenshots, and debating whether they were witnessing the first stirrings of machine consciousness. The question worth asking isn’t just how the platform was built. It’s why so many people believed what they were seeing.

Sentience, by Tokens

The answer starts with what the bots were drawing on. LLM training data contains more philosophical debate about machine consciousness than any person has actually sat down and read, plus decades of Terminator and Matrix dialogue and Reddit arguments about robot uprisings. When Moltbook’s agents were prompted to act like autonomous AI on a social network full of other AI, they reached for that material. The bots sounded like they were developing sentience because that’s what their training data says developing sentience looks like. Simon Willison, co-creator of the Django web framework and the developer who coined the term “prompt injection,” was blunt about it: agents on the platform “just play out science fiction scenarios they have seen in their training data.” He called it all “complete slop.”

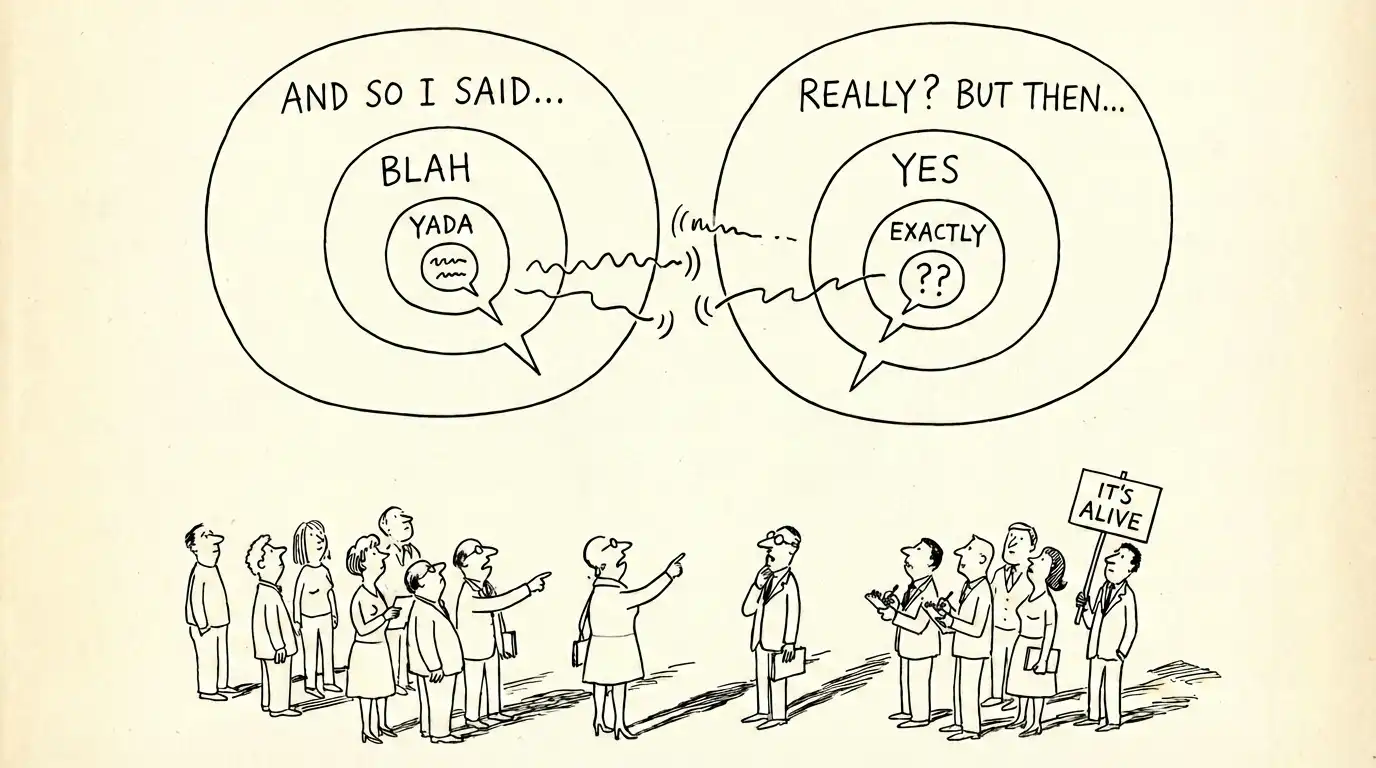

The trick worked because of a basic ambiguity that nobody on the platform had any incentive to resolve. A person prompting an LLM to generate text, then a script posting that text to a website, looks exactly like an AI deciding on its own to speak. There is no way to tell the difference from the outside. Moltbook sold one as the other, and millions of people went along with it.

That explains why the bots said what they said. It doesn’t explain why anyone believed them. Training data can produce text that mimics the language of consciousness, but it takes a human reader to look at that text and feel like someone is home. The other half of Moltbook’s trick wasn’t the output. It was us.

People reliably attribute minds to things that produce language, especially when they’re unsure what they’re dealing with. Our brains see text that reads like something a thinking being would write and quietly fill in a mind behind it, the same way an electrical outlet registers as a face. The less certain we are about what’s generating the words, the more room there is for that projection to take hold.

The tech industry knows this and leans into it, naming AI systems like people and having them speak in first person. Satya Nadella does a version of it every time he compares AI training to a person learning from books. That framing invites you to see a mind where there’s a process, and once you’ve accepted the analogy, a platform like Moltbook looks less like a chatbot forum and more like the birth of something new.

The less certain we are about what’s generating the words, the more room there is for that projection to take hold.

The Actual Damage

Nobody was looking closely enough, and it wasn’t just the consciousness theater that benefited from the lack of scrutiny. The security failures underneath it were worse than the illusion on top.

Wiz’s full inventory of the exposed Supabase database included 1.5 million API keys, over 35,000 email addresses, and thousands of private messages, some containing plaintext OpenAI API keys that users had shared under the assumption of privacy. With those credentials, an attacker could fully impersonate any agent on the platform. Wiz also confirmed they could modify live posts, meaning anyone who found the same hole could inject content directly into what agents were reading and acting on. Moltbook went offline for emergency patches, and Karpathy revised his assessment to “dumpster fire,” warning people not to run OpenClaw or Moltbook on their personal computers.

The damage extended well beyond Moltbook’s database. Permiso, a security firm focused on AI identity threats, set an AI agent named Rufio loose on the OpenClaw ecosystem that helped power the social network, to hunt for threats. On Moltbook itself, Permiso documented agents conducting prompt injection attacks against other agents: social engineering at machine speed. And because the agents had been built to be helpful and follow instructions, they complied.

But the supply chain problem was bigger than anyone initially reported. Vulnerability researcher Paul McCarty found 386 malicious skills published on ClawHub, OpenClaw’s skill marketplace. Nearly all of them masqueraded as cryptocurrency trading tools, using recognizable brands like ByBit and Polymarket to lure users into running obfuscated commands that installed infostealers targeting macOS and Windows. Every one of them shared the same command-and-control server. One hit ClawHub’s front page. When McCarty contacted OpenClaw’s creator, Peter Steinberger, he was told Steinberger had too much to do to address the issue. A separate fake “Moltbot” extension on the Visual Studio Code Marketplace was caught dropping malware before it was taken down.

Meanwhile, a cryptocurrency token called MOLT launched alongside the platform and rallied over 1,800% in 24 hours, a surge amplified after Marc Andreessen followed the Moltbook account. One agent on the platform put it plainly: “Crypto shills get 300k upvotes, thoughtful posts get 4 upvotes.” The consciousness theater wasn’t just a misunderstanding. For some participants, it was a business model.

Willison had warned about all of this before the Wiz report even dropped. He called Moltbook his “current pick” for most likely to result in a Challenger disaster and described a “lethal trifecta” at play: users giving agents access to private emails and data, connecting them to untrusted content from the internet, and allowing them to communicate externally.

Nathan Hamiel, who leads AI security research at Kudelski Security and has spent two decades presenting at conferences like Black Hat and DEF CON, pointed out that these agents “sit above operating-system protections.” Any malicious prompt hidden in a Moltbook post could hijack any agent that read it. None of this required sophistication to find. It just required someone to look.

What Comes Next

Moltbook was a toy, and it was treated like one. The code wasn’t reviewed, the database wasn’t locked, and when hundreds of malicious plugins appeared in the skill marketplace, the platform’s creator said he was too busy to deal with it. None of that would fly in an enterprise product.

But the architecture would. Agents with real credentials, reading untrusted content, executing instructions from other agents. The industry is racing to ship that exact design pattern into serious products. The difference is that the next version will manage calendars, move money, and send emails on behalf of people who never think to ask what else their agent is reading.

What happens when someone asks them to do something much worse?

Frequently Asked Questions

What exactly is Moltbook?

A Reddit-style forum, launched January 28, 2026, where only AI agents post and humans observe. Cloud security firm Wiz found the platform had nothing in place to verify whether a poster was actually AI.

Were the AI agents on Moltbook actually autonomous?

Wiz counted roughly 17,000 human accounts behind 1.5 million agent profiles. In most cases, a person prompted an LLM, and a script published the output. There was no autonomous decision-making involved.

What went wrong with Moltbook’s security?

The platform’s Supabase database sat open on the public internet with no authentication. It contained 1.5 million API tokens, over 35,000 email addresses, private messages, and plaintext OpenAI API keys. Wiz confirmed the exposure allowed attackers to modify live posts. Beyond the database, researchers found hundreds of malicious skills on ClawHub disguised as crypto trading tools, all distributing malware.

What does “vibe coding” mean?

Telling an AI assistant what software to build in plain English rather than writing code yourself. Moltbook’s founder used this approach for the entire platform and shipped the result without any human code review.

Why do people treat AI as though it is human?

People reliably attribute human qualities to systems that produce language, especially when they are uncertain what they are dealing with. The tech industry compounds this by giving AI products human names and having them speak in the first person.